In October 2024, TD Bank agreed to pay over $3 billion in penalties for systemic failures in its anti-money laundering program. Largest penalty of its kind ever imposed on a U.S. bank. But the number that should really bother you is this one: 92% of the bank's total transaction volume went unmonitored between 2018 and 2024. That's $18.3 trillion in activity that no automated system ever looked at.

The industry conversation has focused on governance failures, budget decisions, and compliance culture, and rightly so. But underneath all of that is a story about data architecture. One that matters well beyond a single bank's AML program.

Data Silos Are Organizational Risk

There's an old observation in software engineering called Conway's Law: the systems an organization builds will mirror its communication structures. Data does the same thing. Your data architecture is a map of your organization, and the boundaries in that map are where risk concentrates.

TD Bank's compliance program wasn't just underfunded. It was fragmented in ways that mirrored the org chart. The person responsible for compliance oversight didn't have authority over the team running the monitoring technology. Entire categories of transactions (automated clearing house (ACH) transfers, most check activity, newer payment products) never made it into the monitoring system. Not because someone assessed them and decided they were low risk. Because the system was never updated to ingest them.

From 2014 to 2022, the bank introduced new products, grew substantially, and added zero new scenarios to its transaction monitoring system. Eight years. Zero scenarios.

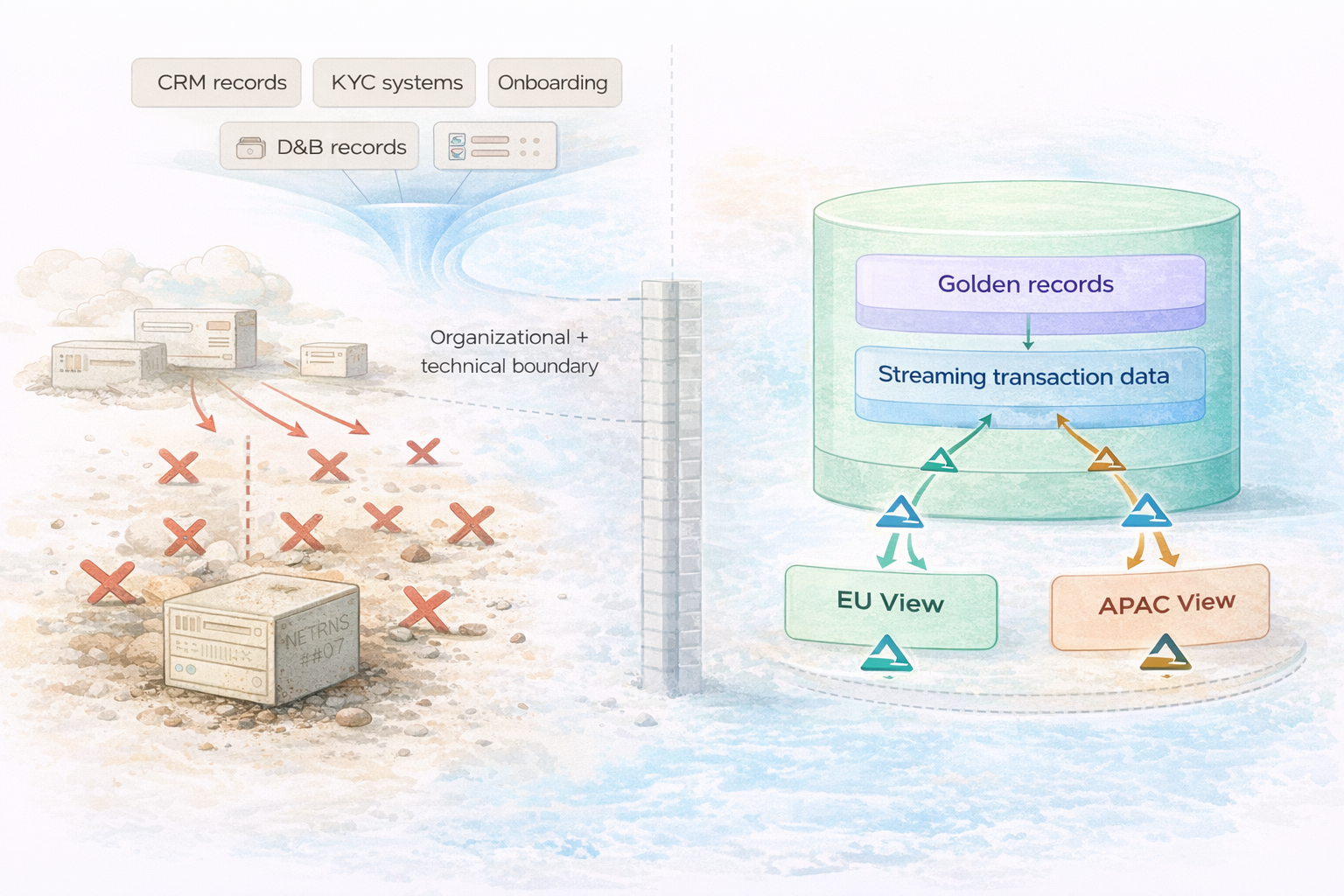

Every one of those gaps was a data silo. The monitoring system couldn't see ACH transactions. Not because the data didn't exist, but because it lived on the other side of an organizational and technical boundary that nobody bridged. That single blind spot contributed to a $3 billion enforcement action.
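One way to make a silo like this visible is to measure monitoring coverage against the ledger, not against the monitoring system's own configuration. The sketch below is a minimal pure-Python illustration. It is not TD Bank's or any vendor's actual tooling. The `Txn` record, the channel names, and the `MONITORED_CHANNELS` set are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Txn:
    channel: str   # hypothetical channel label, e.g. "wire", "ach", "check"
    amount: float


# Hypothetical: the channels the monitoring system is configured to ingest.
MONITORED_CHANNELS = {"wire", "cash"}


def coverage_report(ledger, monitored):
    """Return (share of volume monitored, {unmonitored channel: volume})."""
    volume_by_channel = {}
    for txn in ledger:
        volume_by_channel[txn.channel] = volume_by_channel.get(txn.channel, 0.0) + txn.amount
    total = sum(volume_by_channel.values())
    gaps = {ch: vol for ch, vol in volume_by_channel.items() if ch not in monitored}
    monitored_share = (total - sum(gaps.values())) / total if total else 1.0
    return monitored_share, gaps


# Illustrative ledger. Channels are discovered from the ledger itself, so a
# channel nobody onboarded shows up as a gap instead of silently disappearing.
ledger = [
    Txn("wire", 500.0),
    Txn("ach", 300.0),
    Txn("check", 150.0),
    Txn("cash", 50.0),
]
share, gaps = coverage_report(ledger, MONITORED_CHANNELS)
print(f"monitored share: {share:.0%}, gaps: {gaps}")
```

The design choice that matters is the denominator. A coverage figure computed only from what the monitoring system ingests will always look like 100%.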

Data silos don't just represent technical boundaries you can solve with better integration tooling. They represent policy boundaries. Places where communication breaks down. Where accountability gets murky. Where risk compounds in silence. Regulators are starting to see it this way. FinCEN's enforcement against TD Bank included, for the first time, a mandatory data governance review. Not just policies and people, but the underlying data infrastructure itself.

Now Multiply That Across Borders

TD Bank operated primarily in the U.S. and Canada. Think about what this structural problem looks like for an institution operating under the regulations of five or six countries simultaneously.

Every jurisdiction defines its compliance requirements a little differently. Who counts as a beneficial owner? In most of Europe, it's anyone holding 25% or more of a company. Some regulators look at "significant influence" regardless of percentage. How often do you re-verify your highest-risk customers? Annually in the EU. Tied to internal risk rating elsewhere, with different criteria for what "high risk" even means.
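To make the divergence concrete, here is a minimal sketch that evaluates the same ownership data under two rule sets. The 25%-or-more test mirrors the EU threshold described above. `REGIME_B`, its influence test, and the `Holding` fields are hypothetical stand-ins, not any regulator's actual rule.

```python
from collections.abc import Callable
from dataclasses import dataclass


@dataclass(frozen=True)
class Holding:
    holder: str
    ownership_pct: float         # combined direct and indirect stake, 0-100
    significant_influence: bool  # hypothetical flag, e.g. board control or veto rights


def eu_rule(h: Holding) -> bool:
    # EU-style threshold from the text: 25% or more.
    return h.ownership_pct >= 25.0


def influence_rule(h: Holding) -> bool:
    # Hypothetical regime: anyone with significant influence counts regardless
    # of stake; assumed to keep the 25% ownership test as well.
    return h.significant_influence or h.ownership_pct >= 25.0


RULES: dict[str, Callable[[Holding], bool]] = {"EU": eu_rule, "REGIME_B": influence_rule}


def beneficial_owners(holdings: list[Holding], regime: str) -> list[str]:
    """Names of holders that count as beneficial owners under the given regime."""
    return sorted(h.holder for h in holdings if RULES[regime](h))


holdings = [
    Holding("A", 30.0, False),
    Holding("B", 10.0, True),
    Holding("C", 24.9, False),
]
for regime in RULES:
    print(regime, beneficial_owners(holdings, regime))
```

Same customer, same facts, two different answers. That divergence is what pushes each jurisdiction toward its own view of the customer.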

A single corporate customer might be subject to all of these regimes at once. London's compliance team needs one view of that customer. Singapore needs a different one. New York needs yet another. But those views also have to reconcile with each other, because regulators increasingly expect global consistency, not just local compliance in isolation.

If you squint at this long enough, you realize it's a data architecture problem wearing compliance clothing. Each jurisdiction's compliance function becomes its own silo, with its own systems and its own version of the customer. And the boundaries between those silos are exactly where risk hides.

Traditional MDM platforms, the ones running as standalone SaaS applications disconnected from the broader data platform, can master the data. But they can't break down the silos. To do that, your MDM has to live where your data lives.

The SaaS MDM Ceiling

Most Master Data Management platforms create a single "golden record." One canonical, trusted version of each customer or business entity. Reasonable starting point. But under multi-regime pressure, the architecture of traditional SaaS MDM actively works against you.

Start with data lock-in. When your golden record lives inside a closed SaaS application, every downstream consumer (compliance workflows, regulatory reports, risk calculations) needs an extract. Each extract is a snapshot that starts going stale the moment it's created. The chain of custody breaks at the extraction boundary. Same pattern that cost TD Bank $3 billion, just replicated at the application level.

Then there's scale. A large financial institution processes hundreds of millions of transactions per day. Payments, trades, transfers, settlement activity. Terabytes of data that compliance needs to evaluate against customer risk profiles. Traditional SaaS MDM platforms were never designed for this volume. API rate limits. Batch processing windows. Architectural constraints that cap throughput. Your mastered customer data lives in one system. The transaction data compliance needs to monitor lives somewhere else. The MDM platform can't bridge that gap.

So the two questions that most need to be answered together ("who is this entity?" and "what is this entity doing right now?") end up structurally separated. Compliance teams assemble a composite picture from batch extracts. The seams between those extracts are the blind spots that go undetected until they become regulatory findings.

LakeFusion: MDM Native to Databricks

This is where we (disclosure: I'm LakeFusion's Chief Architect) think the architecture has to go.

LakeFusion is a Master Data Management platform built natively on Databricks. Not integrated with the lakehouse. Native to it. When LakeFusion masters an entity, the golden record is a Delta table, governed by Unity Catalog, living in the customer's own lakehouse. SQL queries, PySpark pipelines, Delta Live Tables, ML models, BI tools, custom applications: they all access mastered data directly. No extraction step. No integration seam where data quality degrades or audit trails break.

What makes this matter for compliance: LakeFusion's golden records live in the same platform where your transaction data already lands. Or where it can arrive via Structured Streaming in near real time. The same Databricks environment that hosts your mastered entities can ingest terabytes of transaction data per day through Structured Streaming pipelines, landing in Delta tables right alongside the golden records. No API rate limits. No batch windows.

That compliance question I mentioned earlier ("what is this entity doing, and does it match their risk profile?") turns into a join between two sets of governed Delta tables. Not an exercise in assembling stale extracts. A Structured Streaming pipeline can continuously evaluate incoming transactions against LakeFusion's mastered customer attributes, flagging anomalies in near real time on serverless compute.
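The join described above can be modeled in a few lines. This is a pure-Python sketch of the logic only. It assumes a hypothetical risk-profile attribute (`expected_monthly_volume`) and a hypothetical per-rating limit policy, and it is not LakeFusion's API. On Databricks the same shape would typically be a Structured Streaming stream-static join between incoming transactions and the golden-record Delta table.

```python
from collections.abc import Iterable, Iterator
from dataclasses import dataclass


@dataclass(frozen=True)
class GoldenRecord:
    entity_id: str
    risk_rating: str                # hypothetical values: "low", "medium", "high"
    expected_monthly_volume: float  # hypothetical risk-profile attribute


@dataclass(frozen=True)
class Transaction:
    txn_id: str
    entity_id: str
    amount: float


# Hypothetical policy: largest single transaction allowed, as a fraction of
# expected monthly volume, before it is flagged.
LIMIT_FACTOR = {"low": 1.0, "medium": 0.5, "high": 0.25}


def flag_transactions(
    txns: Iterable[Transaction], golden: dict[str, GoldenRecord]
) -> Iterator[tuple[str, str]]:
    """Join each transaction to its golden record and yield (txn_id, reason) flags."""
    for txn in txns:
        profile = golden.get(txn.entity_id)
        if profile is None:
            # No mastered entity to compare against: report it rather than skip it.
            yield txn.txn_id, "unresolved_entity"
            continue
        limit = profile.expected_monthly_volume * LIMIT_FACTOR[profile.risk_rating]
        if txn.amount > limit:
            yield txn.txn_id, "exceeds_profile"


golden = {
    "C1": GoldenRecord("C1", "low", 10_000.0),
    "C2": GoldenRecord("C2", "high", 10_000.0),
}
txns = [
    Transaction("t1", "C1", 9_000.0),  # within a low-risk profile
    Transaction("t2", "C2", 3_000.0),  # above the high-risk limit of 2,500
    Transaction("t3", "C9", 50.0),     # counterparty never mastered
]
flags = list(flag_transactions(txns, golden))
print(flags)
```

A transaction whose counterparty doesn't resolve to a golden record is flagged rather than dropped. Silently skipping it would recreate exactly the kind of blind spot the join is meant to close.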

Because LakeFusion writes mastered data as open Delta tables, the silo between "MDM" and "everything else" doesn't exist. That's what makes the next piece possible.

Regime-Specific Views from a Single Source of Truth

Instead of one golden record that every jurisdiction awkwardly shares, LakeFusion's mastered entities become the foundation for regime-specific downstream views, each materialized as a governed Delta table. Your EU compliance view of a customer carries the attributes, ownership thresholds, and review metadata the EU requires. Your Singapore view carries different attributes shaped to that country's expectations. Both derive from the same golden record. Both are versioned and governed through Unity Catalog. Both maintain a full audit trail back to their original sources.

In practice, these are Delta Live Tables pipelines sitting on top of the golden record tables LakeFusion writes. No export step. No separate transformation layer outside the platform. No gap in chain of custody.
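Here is a minimal sketch of the idea in plain Python rather than Delta Live Tables. Each regime view is a projection of one golden record, every value carries its lineage, and a reconciliation check flags shared attributes that disagree across views. The attribute names, source-system labels, and view specifications are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Attribute:
    value: object
    source: str  # lineage: the system this value was mastered from


# Hypothetical golden record for one corporate customer.
golden = {
    "legal_name": Attribute("Acme Holdings Ltd", "crm"),
    "registration_country": Attribute("GB", "kyc_onboarding"),
    "beneficial_owners": Attribute(("A",), "ownership_registry"),
    "risk_rating": Attribute("high", "risk_engine"),
    "next_review_date": Attribute("2025-06-30", "kyc_onboarding"),
}

# Hypothetical view specs: which golden-record attributes each regime's view carries.
VIEW_SPECS = {
    "EU": ["legal_name", "registration_country", "beneficial_owners", "next_review_date"],
    "SG": ["legal_name", "registration_country", "risk_rating"],
}


def derive_view(record: dict, regime: str) -> dict:
    """Project the golden record into one regime's view; values keep their lineage."""
    return {name: record[name] for name in VIEW_SPECS[regime]}


def reconcile(views: dict) -> set:
    """Return attribute names that appear in several views with different values."""
    first_seen = {}
    conflicts = set()
    for view in views.values():
        for name, attr in view.items():
            if first_seen.setdefault(name, attr.value) != attr.value:
                conflicts.add(name)
    return conflicts


views = {regime: derive_view(golden, regime) for regime in VIEW_SPECS}
print(reconcile(views))

# A hand-edited copy of the SG view, standing in for a locally modified extract.
drifted = {**views, "SG": {**views["SG"], "legal_name": Attribute("ACME HOLDINGS", "manual_extract")}}
print(reconcile(drifted))
```

Views derived from the same record reconcile by construction. The drifted copy, standing in for a hand-maintained extract, is what the check catches.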

Third-party enrichment fits here too. LakeFusion's native integration with Dun & Bradstreet pulls corporate ownership hierarchies, firmographic data, and risk signals directly into the mastering flow. That enrichment data arrives as governed attributes on the golden record, with the same lineage and freshness as every other mastered attribute. When a compliance workflow needs to verify a customer's corporate ownership structure, it's working with D&B data that came through LakeFusion's governed pipeline. Not a stale quarterly file from a separate vendor portal.
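Here is a sketch of what "same lineage and freshness" can mean at the attribute level. It models enrichment as a merge that stamps each value with its source and as-of date, then reports attributes older than a freshness limit. The `"dnb"` label, the attribute names, and the newest-value-wins survivorship rule are hypothetical. The sketch does not use or represent any real Dun & Bradstreet or LakeFusion interface.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Attribute:
    value: object
    source: str  # lineage: which pipeline or provider supplied the value
    as_of: date  # when the source asserted the value


def enrich(record: dict, vendor_attrs: dict, source: str, as_of: date) -> dict:
    """Merge vendor attributes into a copy of the golden record, keeping lineage.

    Hypothetical survivorship rule: a vendor value wins only if it is at least
    as recent as the value already on the record.
    """
    merged = dict(record)
    for name, value in vendor_attrs.items():
        current = merged.get(name)
        if current is None or as_of >= current.as_of:
            merged[name] = Attribute(value, source, as_of)
    return merged


def stale_attributes(record: dict, today: date, max_age: timedelta) -> list[str]:
    """Names of attributes whose as-of date is older than max_age."""
    return sorted(name for name, attr in record.items() if today - attr.as_of > max_age)


golden = {
    "legal_name": Attribute("Acme Holdings Ltd", "crm", date(2024, 1, 10)),
    "parent_entity": Attribute("Acme Group plc", "crm", date(2023, 3, 1)),
}
enriched = enrich(
    golden,
    {"parent_entity": "Globex Corp", "ultimate_parent": "Globex Corp"},
    source="dnb",
    as_of=date(2024, 9, 1),
)
print(enriched["parent_entity"])
print(stale_attributes(enriched, today=date(2024, 10, 1), max_age=timedelta(days=180)))
```

Because the provenance travels with each value, a compliance workflow can ask both "what is the parent entity?" and "who said so, and when?" from the same record.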

Data Architecture Is Business Risk

FinCEN's data governance review requirement in the TD Bank case is a signal. International banking standards already set this bar. The Basel Committee's BCBS 239 principles for risk data aggregation and reporting expect systemically important banks to produce accurate, complete, reconciled risk views within hours during a stress event. The EU's new Anti-Money Laundering Authority (AMLA) is being set up to push consistent AML supervision across member states. Cross-regime reconcilability is going to become an examination topic.

But regulation is the forcing function, not the point. The point is that data architecture is a category of business risk, and most organizations don't treat it that way.

Data silos are boundaries where oversight weakens. SaaS platforms that lock your data behind API rate limits become chokepoints where scale constraints turn into compliance constraints. Extraction pipelines are seams where freshness degrades and lineage breaks. We're used to treating these as technical debt. They're operational risk.

We built LakeFusion to eliminate these boundaries. Because it's native to Databricks, not bolted on through connectors and extracts, it erases the silo between MDM and the rest of your data platform. Mastered entities, D&B enrichment, streaming transaction activity, regime-specific compliance views, analytical workloads: all in the same governed environment. Delta tables for reliability. Structured Streaming for real-time ingestion. Delta Live Tables for materialized downstream views. Unity Catalog for governance. Serverless compute for elastic scale. LakeFusion for the mastering, matching, enrichment, and stewardship that makes it all trustworthy.

TD Bank's $3 billion lesson wasn't that compliance is expensive. It was that fragmented data architecture compounds silently until it doesn't.

Roz King is Chief Architect at LakeFusion AI, a Master Data Management platform built natively on Databricks. LakeFusion brings enterprise MDM (entity resolution, native Dun & Bradstreet enrichment, governed data stewardship, and flexible data modeling) directly into the data lakehouse, eliminating the silos between mastered data and the rest of your analytical and operational workloads.
