Your data already has relationships. lakegraph makes them queryable — without moving a single byte.

Model

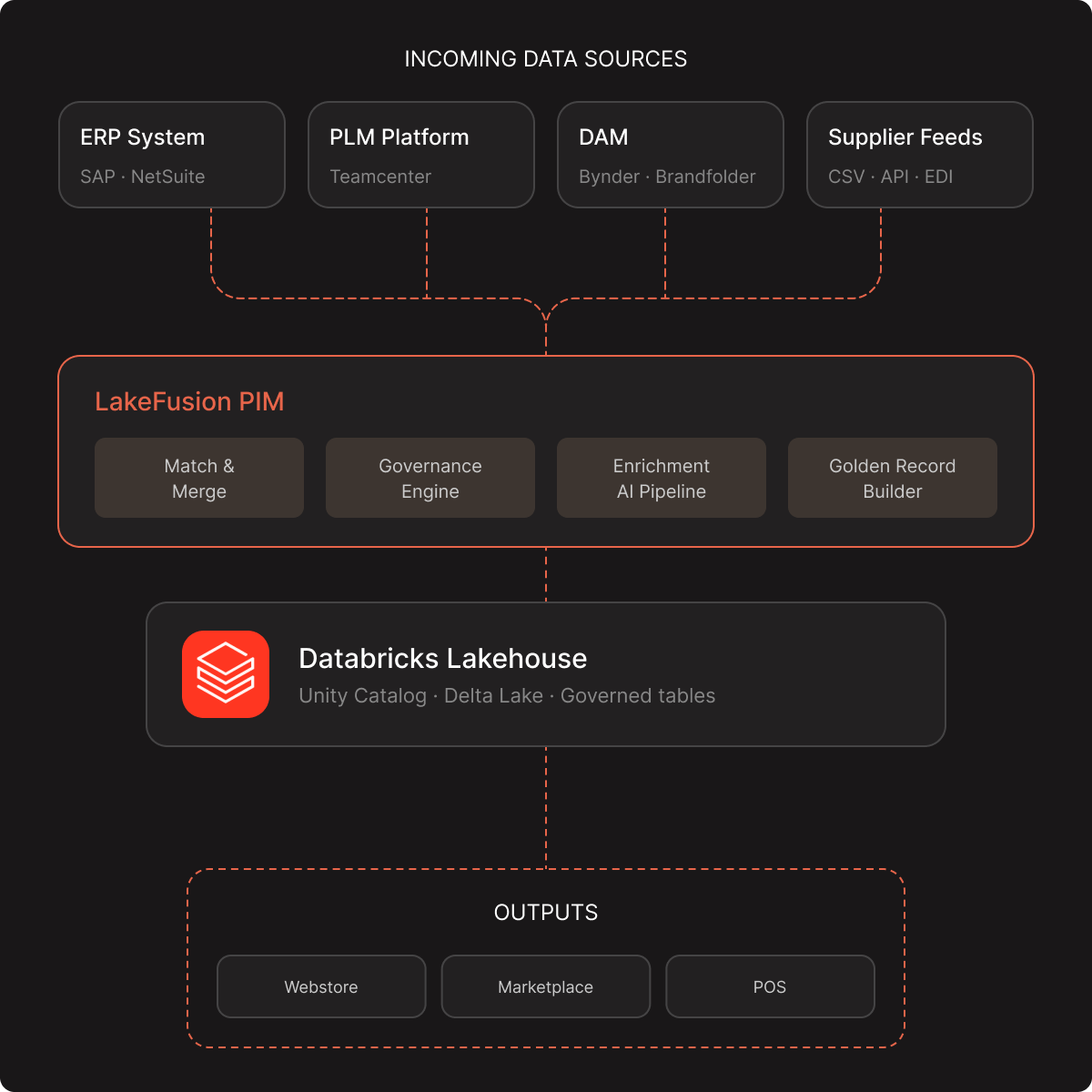

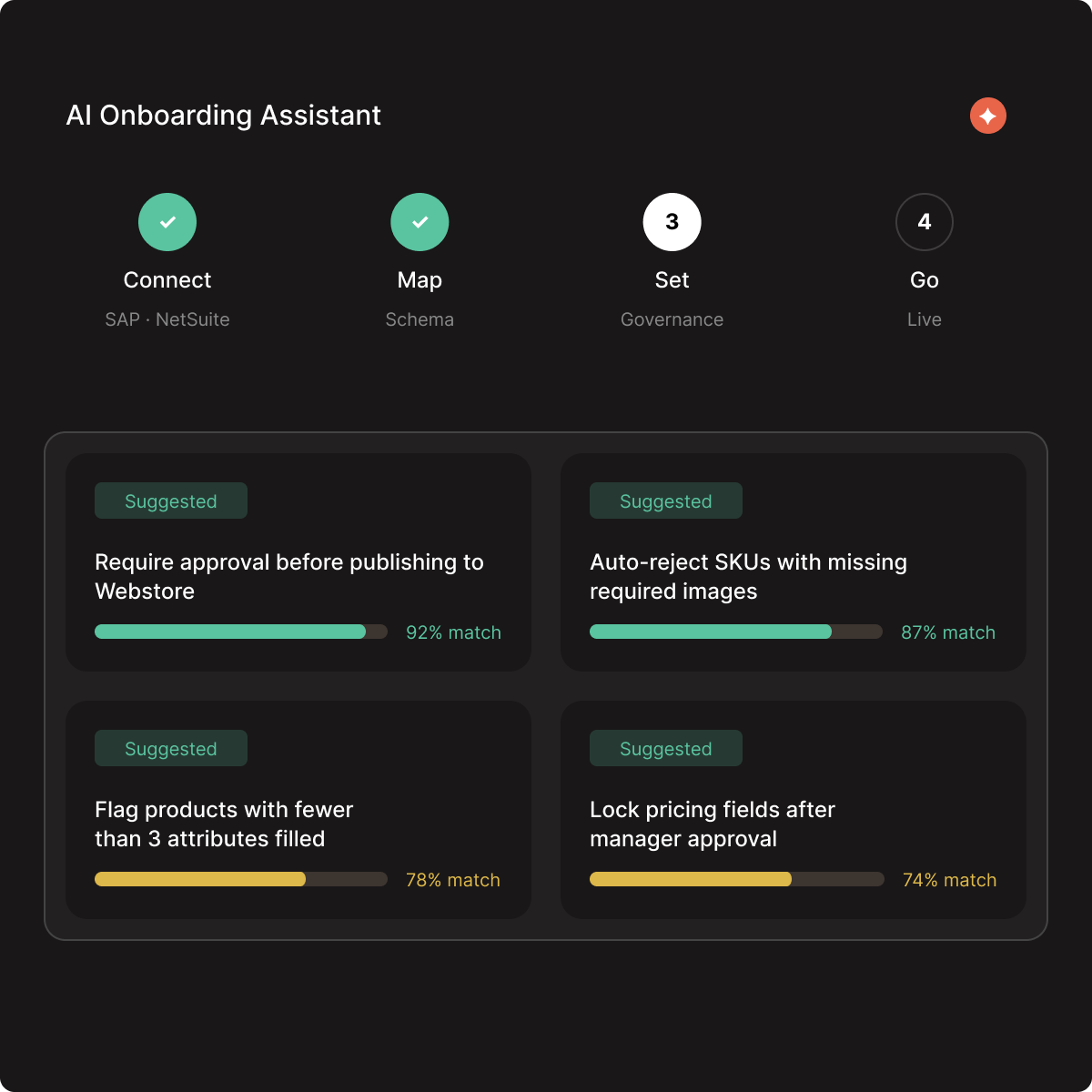

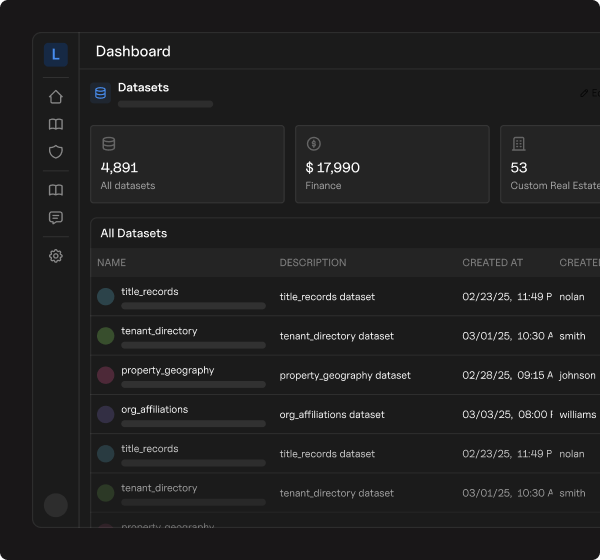

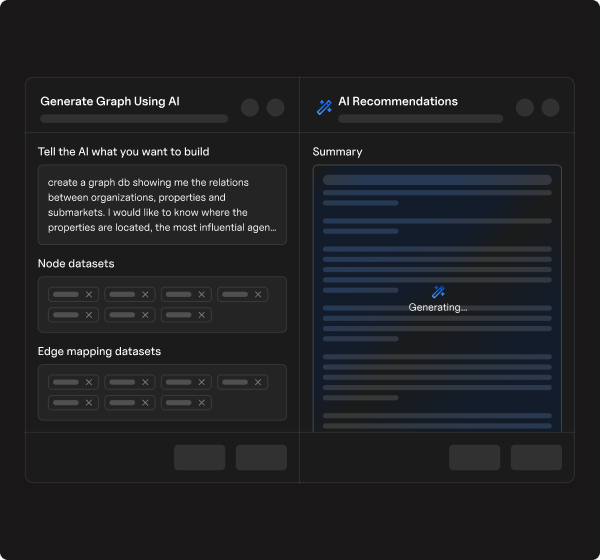

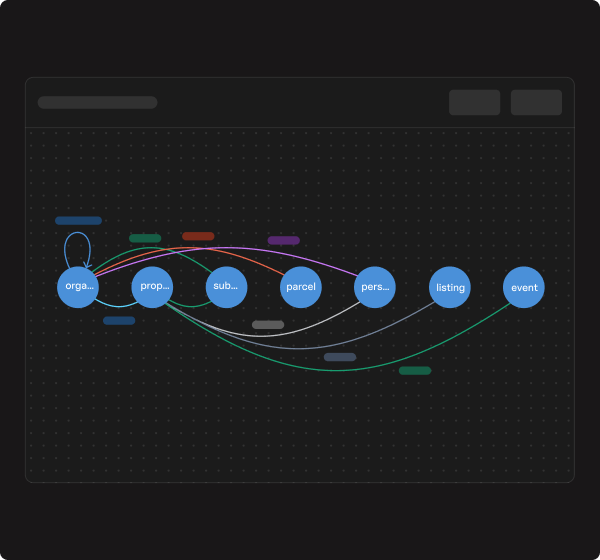

Point lakegraph at your Delta Lake tables. AI discovers entities, infers relationships, and builds a persistent graph index, powered by liquid clustering for instant lookups.

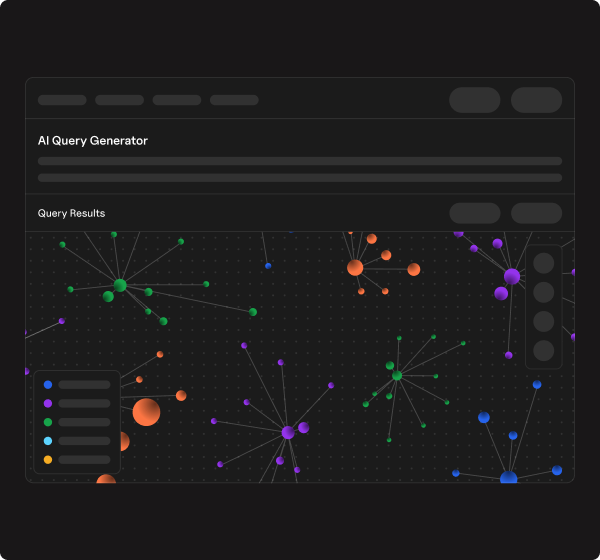

Query

Run 1-to-5 hop traversals in seconds via Lakebase, with pre-computed adjacency lists and three-layer caching. No Spark cluster cold starts.
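
To make the idea of a bounded-hop traversal over pre-computed adjacency lists concrete, here is a minimal Python sketch. It is not lakegraph's API: the `ADJACENCY` dictionary, the node ids, and the `k_hop_neighbors` function are all hypothetical, and the in-memory dictionary only stands in for whatever store actually holds the adjacency lists.

```python
from collections import deque

# Hypothetical pre-computed adjacency lists: node id -> list of neighbor ids.
# In a real deployment these would be read from a table, not hard-coded.
ADJACENCY = {
    "customer:1": ["order:10", "order:11"],
    "order:10": ["product:100"],
    "order:11": ["product:101"],
    "product:100": ["supplier:7"],
    "product:101": [],
    "supplier:7": [],
}


def k_hop_neighbors(adjacency, start, max_hops):
    """Return {node: hop_distance} for every node within max_hops of start."""
    distances = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if distances[node] == max_hops:
            continue  # do not expand nodes that already sit at the hop limit
        for neighbor in adjacency.get(node, []):
            if neighbor not in distances:  # first visit is the shortest path in BFS
                distances[neighbor] = distances[node] + 1
                queue.append(neighbor)
    return distances


two_hops = k_hop_neighbors(ADJACENCY, "customer:1", 2)
three_hops = k_hop_neighbors(ADJACENCY, "customer:1", 3)
```

Because each node's neighbors are already materialized, every hop is a lookup rather than a join, which is the property the pre-computed adjacency lists are meant to provide.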

Operate

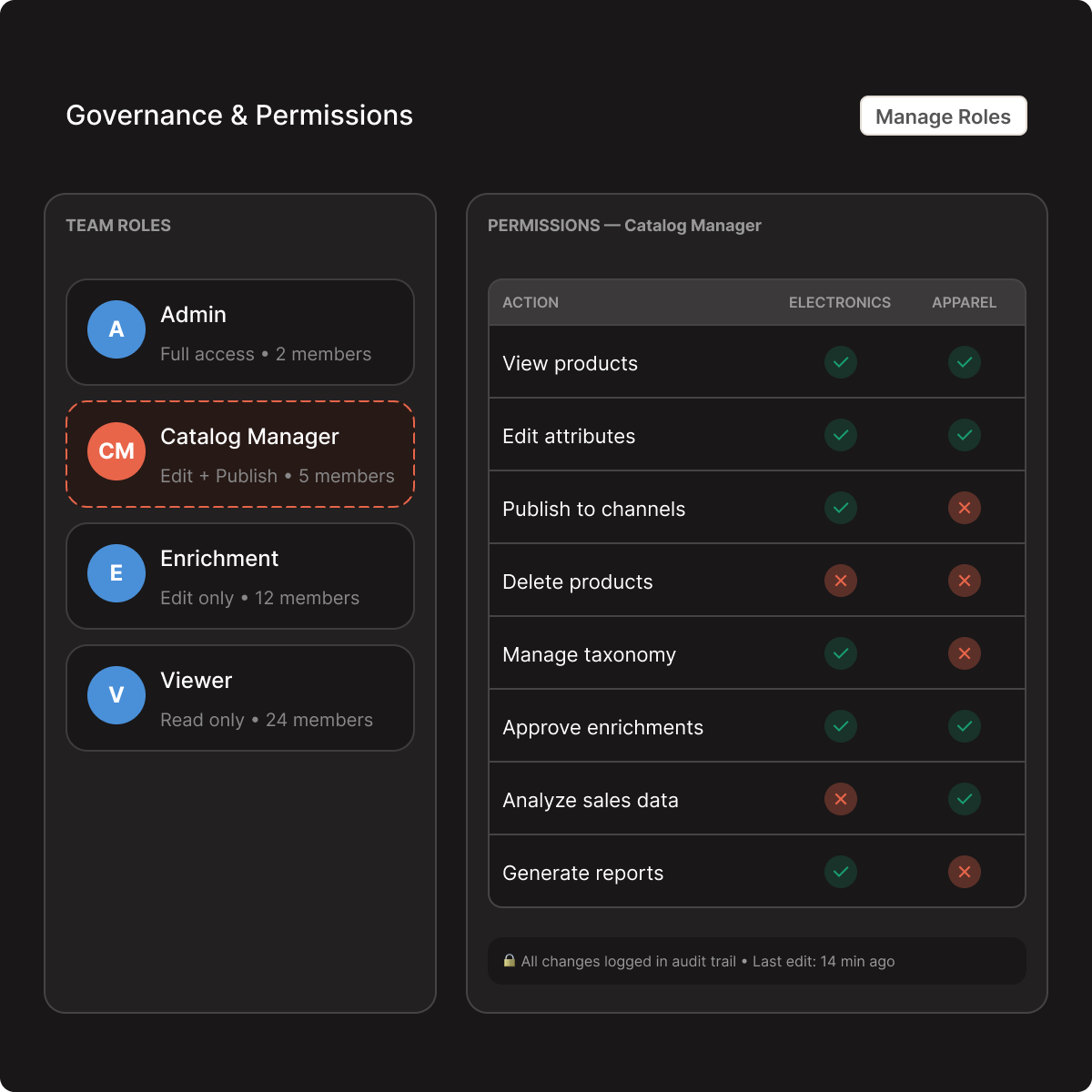

Feed graph features directly into Databricks ML pipelines, BI dashboards, and real-time applications, all governed by Unity Catalog.
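
As a rough illustration of what a "graph feature" can look like before it reaches a model or dashboard, the sketch below derives in-degree and out-degree per node from a list of edges. The `EDGES` data, the column names, and the `degree_features` function are assumptions made for this example and do not describe lakegraph's actual output schema.

```python
from collections import Counter

# Hypothetical edge rows as (source, target) pairs.
EDGES = [
    ("customer:1", "order:10"),
    ("customer:1", "order:11"),
    ("order:10", "product:100"),
    ("order:11", "product:100"),
]


def degree_features(edges):
    """Build one feature row per node with its out-degree and in-degree."""
    out_degree = Counter(source for source, _ in edges)
    in_degree = Counter(target for _, target in edges)
    nodes = sorted(set(out_degree) | set(in_degree))
    # Counter returns 0 for nodes that never appear on one side of an edge.
    return [
        {"node_id": node, "out_degree": out_degree[node], "in_degree": in_degree[node]}
        for node in nodes
    ]


features = degree_features(EDGES)
```

Rows in this shape (one record per node, one column per feature) can be written to a table and joined to training data on `node_id`.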